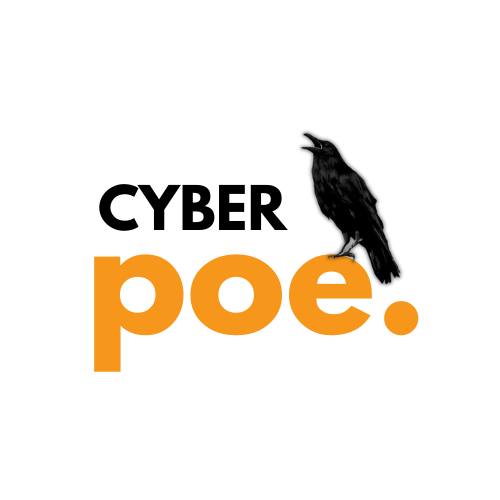

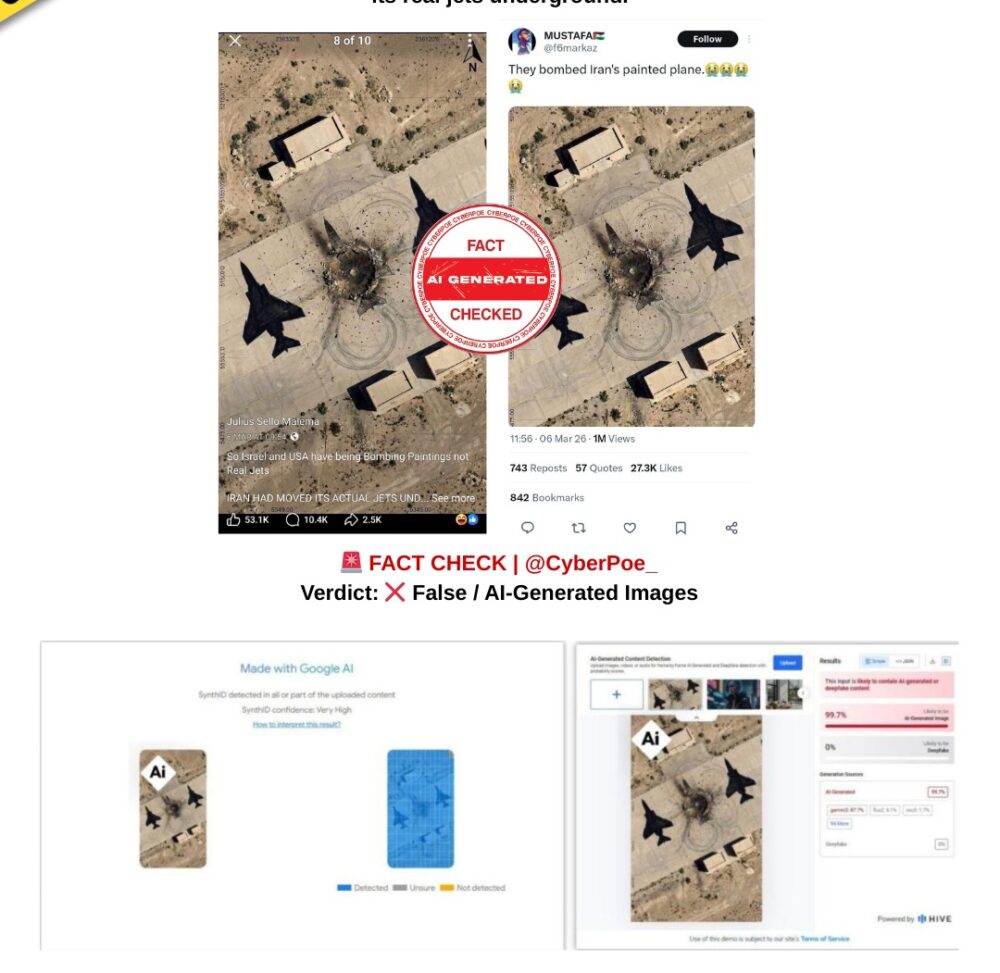

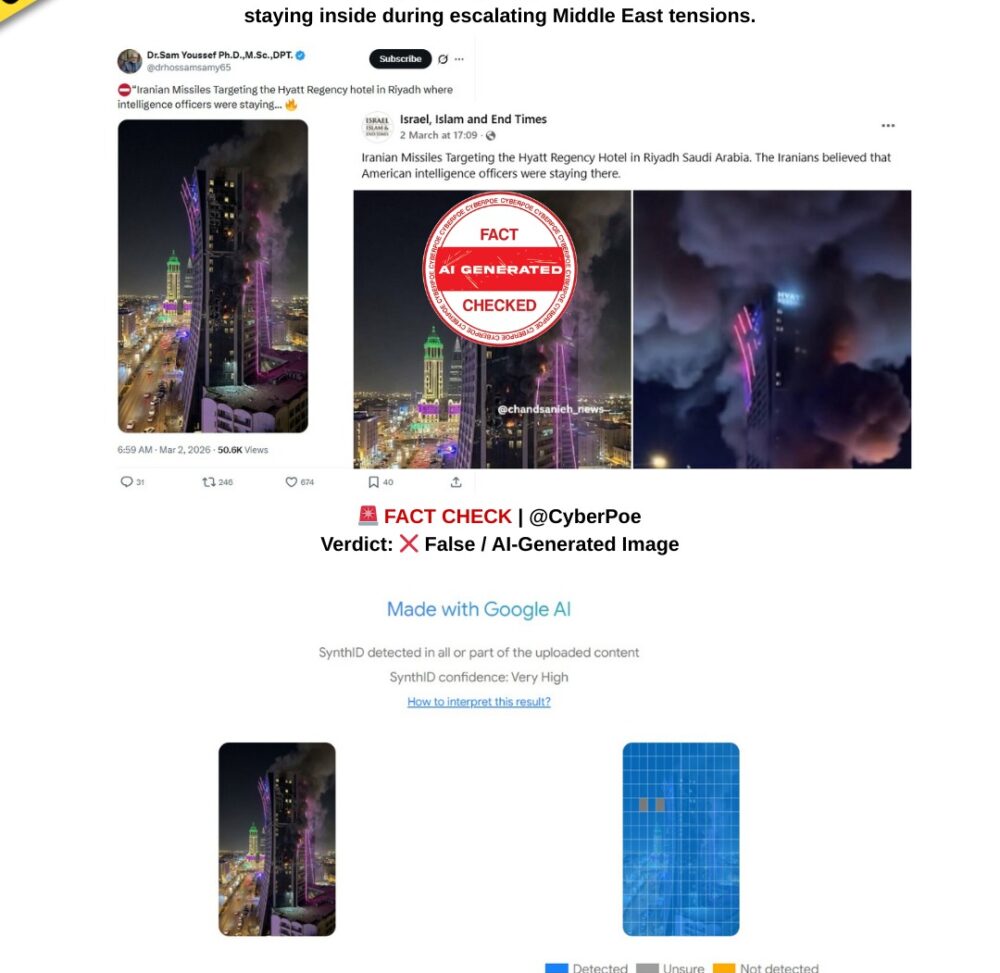

AI-Generated Image Falsely Claims to Show Khamenei’s Body Beneath Rubble

The Claim

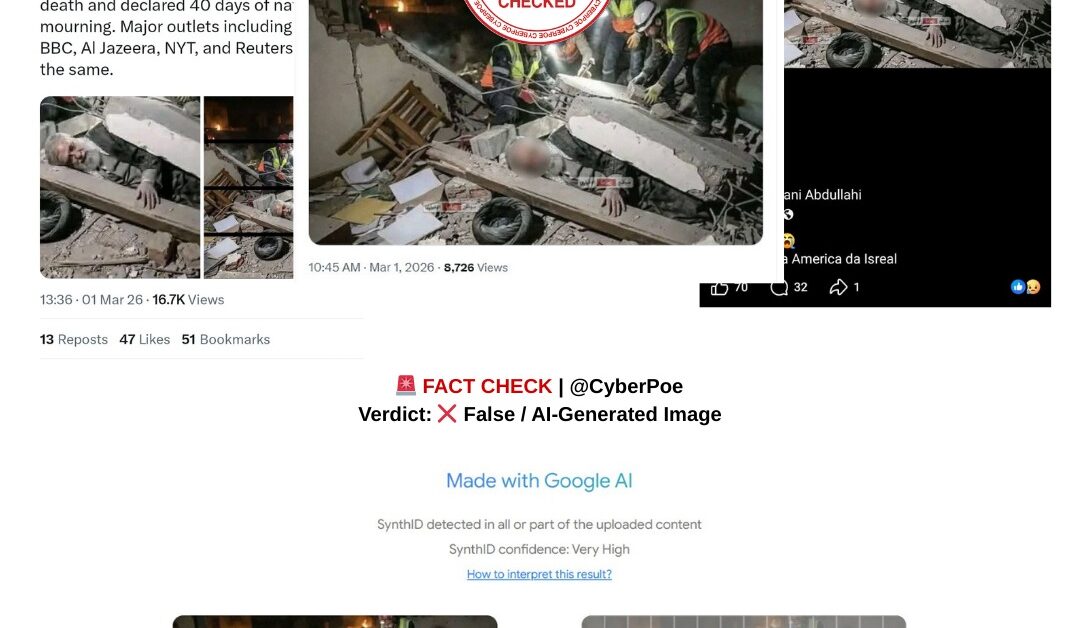

An image circulating widely on social media, including X,[1] Facebook,[2] and other platforms,[3] claims to show Iranian Supreme Leader Ayatollah Ali Khamenei’s body trapped beneath rubble following alleged airstrikes carried out by the United States and Israel. The picture depicts rescue workers searching through debris while what appears to be Khamenei’s body lies partially buried under collapsed structures.

Because of the sensitive geopolitical context surrounding Iran and the global attention on any developments involving its leadership, the image spread rapidly across multiple platforms. Many users shared it as if it were a real photograph documenting the aftermath of a strike and confirming reports of Khamenei’s death.[4]

What CyberPoe Verified

Technical analysis indicates that the viral image was generated using artificial intelligence rather than captured by a real camera. The visual was examined using Google’s SynthID detection system,[1] a tool designed to identify content created by Google’s generative AI models.

SynthID works by scanning images for invisible digital watermarks embedded during the AI image generation process. These markers cannot be seen by the human eye but can be detected through specialized verification tools.
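To make the idea of an invisible but machine-detectable marker concrete, here is a minimal toy sketch. It is not SynthID's method, which is not described in this article. It swaps in a much simpler, classic technique (least-significant-bit watermarking) purely for illustration. The `MARK` signature, the `embed` and `detect` helpers, and the randomly generated stand-in "image" are all hypothetical.

```python
import random

# Toy illustration only: least-significant-bit (LSB) watermarking on a
# grayscale image stored as a flat list of 0-255 pixel values.
# This is NOT SynthID's algorithm; it only shows how a mark can be
# invisible to the eye yet detectable by software.

MARK = [1, 0, 1, 1, 0, 0, 1, 0]  # hypothetical 8-bit signature


def embed(pixels, mark=MARK):
    """Return a copy of pixels with the mark repeated across the lowest bit."""
    return [(p & ~1) | mark[i % len(mark)] for i, p in enumerate(pixels)]


def detect(pixels, mark=MARK):
    """Return the fraction of pixels whose lowest bit matches the mark."""
    if not pixels:
        return 0.0
    hits = sum((p & 1) == mark[i % len(mark)] for i, p in enumerate(pixels))
    return hits / len(pixels)


rng = random.Random(42)
original = [rng.randrange(256) for _ in range(4096)]  # stand-in "image"
marked = embed(original)

# Each pixel changes by at most one brightness level out of 255,
# far too little for a viewer to notice.
max_change = max(abs(a - b) for a, b in zip(original, marked))
print("largest pixel change:", max_change)

# The marked image matches the signature on every pixel (score 1.0);
# the unmarked one matches only by chance (roughly half the pixels).
print("score, marked image:  ", detect(marked))
print("score, unmarked image:", detect(original))
```

A mark this simple would not survive resizing, cropping, or recompression. Production watermarking systems are built to be far more robust, so the sketch should be read only as an intuition for "invisible to people, visible to a detector".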

Two different versions of the image circulating online were analyzed using the detection system. In both cases, the results returned “very high confidence” that the image had been produced using Google’s AI technology.

This strongly indicates that the picture was artificially generated and does not document a real event.

Why AI Images Spread During Breaking News

AI-generated images increasingly appear during major geopolitical developments, particularly those involving war, political leaders, or other crises. Because these images can look highly realistic, they are often mistaken for authentic photographs when shared without verification.

During fast-moving news cycles, dramatic visuals, especially those showing alleged deaths, attacks, or destruction, can spread rapidly before their authenticity is examined. This creates opportunities for synthetic images to influence public perception even when they have no factual basis.

As generative AI tools become more advanced, verifying the origin, metadata, and digital fingerprints of viral images has become essential to prevent misinformation from spreading unchecked.

CyberPoe Verdict

❌ False / AI-Generated Image

The image claiming to show Ayatollah Ali Khamenei’s body trapped beneath rubble is synthetic. Technical analysis using Google’s SynthID system found strong evidence that the image was created using AI rather than photographed at a real-world event.

This case highlights how AI-generated visuals can rapidly circulate during geopolitical crises, underscoring the importance of verifying viral content before treating it as evidence. 🌍

CyberPoe | The Anti-Propaganda Frontline 🌍